Imagine sitting in a cozy coffee shop on a rainy afternoon in 2026, scrolling through yet another alarming article about the economy’s future. That’s me, Oren Etzioni, pondering the whirlwind of artificial intelligence and how it’s reshaping jobs and livelihoods. Many economists paint a grim picture: AI automating white-collar tasks at an exponential speed, squeezing wage incomes, crashing consumer demand, and possibly tanking the entire economy. It’s a simple narrative, almost like a sci-fi thriller—machines get smarter, humans get sidelined, and chaos ensues. Stories like the notorious 2028 Global Intelligence Crisis circulate widely, warning that recursive AI improvements will displace workers en masse, leading to a downward spiral. The “displacement doomers,” as they call themselves, have a way of grabbing headlines with their terrifying predictions. They remind me of those apocalyptic movies where technology runs rampant, leaving society in ruins. But is it that straightforward? As someone who’s watched tech evolve for decades, I can’t help but question if the fear is overhyped or based on real, lived experiences. I’ve seen AI assistants handle mundane tasks, freeing people for more creative work, but also witnessed layoffs at firms rushing to adopt robots. It’s personal—friends in finance or marketing fretting about obsolete skills. This debate isn’t abstract; it touches everyday lives, from the barista brewing your coffee to the software developer coding late into the night. Yet, the doomers argue that if machines can think and reason, why pay humans? It’s a punchy line that stokes anxieties about job security and economic stability.

Enter a fresh perspective from the economists at Citadel Securities in their 2026 note, titled The 2026 Global Intelligence Crisis. They push back against the doomers with a reassuring economics lesson: just because AI can self-improve doesn’t mean businesses will roll it out overnight. The point resonates with everyday logic. When technology boosts productivity, it shouldn’t slam the brakes on the economy; instead, it expands the supply of goods and services, potentially lifting everyone. I’ve experienced this firsthand: early automation in manufacturing increased output without cratering demand, much as spreadsheets revolutionized accounting without wiping out accountants entirely. Citadel emphasizes real-world hurdles. Companies can’t just swap humans for AI like changing a light bulb. There are logistical nightmares (integrating systems, training teams, dealing with regulations) and the sheer cost of errors in critical roles. Picture a bank trying to automate loan approvals; one glitch could mean millions in losses. These “institutional frictions” act as speed bumps, slowing adoption. In my own work life, I’ve seen startups pivot to AI, only to face delays from integration issues. It’s not about conspiracy but practicality. People get sick, take vacations, or rebel against change, and machines don’t account for that human element. Citadel’s note argues that tech’s benefits flow through increased productivity rather than outright displacement. Yet, as I reflect, it’s not all rosy; the workers who are displaced still struggle. But Citadel urges us not to panic: history shows technologies evolve with the workforce, not against it. This view feels grounded, like advice from a wise old mentor cautioning against hysteria. Overall, their argument restores some balance, reminding us that economies adapt, and we’re not on the brink just yet.

But wait, there’s a flaw in Citadel’s optimism that hits close to home for me. Their star idea is the “compute-cost ceiling”: a natural economic brake where rushing AI adoption spikes demand for computing power, driving up prices until it costs more to use AI than to hire humans. It’s an elegant theory, but in the real world of 2026, it feels outdated. I’ve been deep in the tech trenches, watching costs plummet while capabilities soar. Citadel overlooks this dynamic, as if studying a world where prices froze at 2020 levels. Let me break it down personally: when I first dipped into AI experimentation back in the early 2010s, running a simple model cost hundreds of dollars per hour. Today, it’s pennies. That “ceiling” isn’t holding; it’s the floor dropping out. Imagine planning a home renovation: costs spike initially, but if materials keep getting cheaper, the project keeps rolling. Citadel’s brake assumes static prices, but AI’s reality is fluid and accelerating. In conversations with peers, we’ve laughed about those who bet against Moore’s Law and were proven wrong. Similarly, this AI-specific cost curve shows no signs of stabilizing. Frictions exist, sure; I’ve seen corporate inertia up close in my board experiences. But they’re not insurmountable. I’ve seen companies accelerate AI integration when the economics flip, like the rush to cloud computing in the pandemic. Messy, human-driven decisions win out over pure theory. Citadel’s argument might buy time, but it misses the human hustle to overcome barriers, driven by competition and innovation.

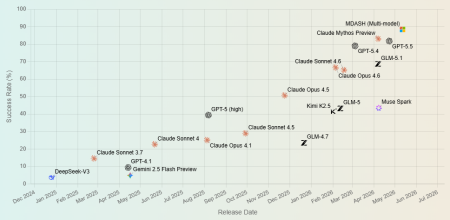

Digging deeper, the real kicker is how compute costs are tumbling off a cliff, a trend that’s personal and palpable. Moore’s Law, the legendary guidepost popularly summarized as compute costs halving every 18 months, has officially “retired” after a 50-year sprint, but AI has its own turbocharged version. For the past couple of years, large language model (LLM) inference costs per token have dropped by about ten times annually, a dizzying trend that Andreessen Horowitz dubbed “LLMflation.” They documented a staggering 1,000-fold reduction over three years alone. As someone who’s invested in startups leveraging this, I’ve watched budgets for AI experiments plummet, enabling small teams to innovate where giants once monopolized. It’s like the smartphone revolution: devices got cheaper and better, democratizing access. Sure, frontier AI models gobble more tokens per query due to enhanced reasoning, which slows the cost drop per task. But overall? Still plummeting fast. Optimists like Citadel invoke a “ceiling,” but picture this: a ceiling that recedes tenfold every year contains nothing; it invites faster adoption. In my daily routine, tracking these trends feels exhilarating; I’ve shifted projects from costly GPUs to efficient distilled models, saving thousands. Algorithmic tweaks, hardware leaps like specialized AI chips, quantization for lighter models, and fierce competition among providers like AWS or bespoke AI firms all propel this downward curve. No slowdown in sight. Humans fuel this too: engineers racing to optimize, entrepreneurs vying for market share. It’s not impersonal doom; it’s a human-driven race toward efficiency, echoing my own pushes to streamline operations. Running LLMs on devices like phones? Already feasible. This isn’t fiction; it’s the evolution I’ve witnessed, where barriers crumble under relentless progress.
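To make the compounding concrete, here is a minimal Python sketch that compares the two paces cited above. It takes the article's own round figures at face value (roughly tenfold per year for LLM inference, a halving every 18 months for Moore's Law) and assumes a constant rate of decline, which real prices will not follow exactly. The function names are illustrative only.

```python
def total_drop(annual_factor: float, years: float) -> float:
    """Total cost reduction factor after `years` of a constant annual decline."""
    return annual_factor ** years


def drop_from_halving(months_per_halving: float, years: float) -> float:
    """Total cost reduction factor when costs halve every `months_per_halving` months."""
    halvings = years * 12 / months_per_halving
    return 2.0 ** halvings


YEARS = 3
llm_drop = total_drop(10.0, YEARS)         # assumed pace: about 10x cheaper per year
moore_drop = drop_from_halving(18, YEARS)  # assumed pace: halving every 18 months

print(f"LLM inference over {YEARS} years: {llm_drop:,.0f}x cheaper")
print(f"Moore's Law pace over {YEARS} years: {moore_drop:,.0f}x cheaper")
```

Under these assumptions, three years at the LLM pace compounds to a 1,000-fold drop, while three years at the Moore's Law pace yields only a 4-fold drop. That gap is why a price ceiling set by today's compute costs is unlikely to stay in place for long.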

But economics isn’t just about costs; it’s about who benefits, a truth that hits home in 2026’s unequal world. Citadel nods to John Maynard Keynes’s 1930 daydream of 15-hour workweeks, arguing that people’s craving for more stuff, like iPhones or vacations, will keep demand alive. Fair enough; I adore my smart home gadgets and the freedom they afford. Yet, as someone reflecting on widening wealth gaps, I think this glosses over the distribution nightmare. If AI gains enrich the top 0.1% (tycoons like Elon Musk or Jeff Bezos extracting wealth from tech empires) while the masses scramble for scraps, aggregate demand fizzles. Those elites can’t binge-buy endlessly; there aren’t enough of them to sustain the economy. I’ve seen this in Seattle’s startup scene, where opportunities flow to the privileged, leaving gig workers in precarious jobs. No wage will ever rival Bezos’s wealth. Policy is silent; retraining programs fail without buy-in. It’s a human story of inequality, where AI amplifies divides instead of bridging them. I remember chatting with displaced factory workers in my hometown of Detroit, their hopes dashed by automation waves decades ago. Now, with white-collar roles threatened, the stakes feel personal. Economists like Thomas Piketty warn of rifts leading to unrest. Citadel’s optimism assumes equitable sharing, but history screams otherwise. From my perspective, it’s misleading to ignore who holds the purse strings. AI’s boon becomes a curse without redistribution, fueling social tensions that no economic model can erase.

So, where does this tangled web leave us? Citadel’s note deserves kudos for getting some things right: institutional frictions and societal responses like policies can indeed temper AI’s rush, buying crucial time for adjustment. Democratic safeguards might mandate transitions, taxing AI profits to fund education or UBI. I’ve been part of such discussions in policy forums, pushing for tech to serve the many, not the few. These brakes are real, rooted in human values resisting pure capitalism. But the opposing force, plummeting costs driven by algorithmic genius, hardware innovation, and cutthroat competition, is unstoppable, a tide that erodes those frictions every year. Displacement doom is overstated in its immediacy, delayed by real-world messiness, but displacement is inevitable. As someone who’s navigated booms and busts, I see upheaval on the horizon: economic shifts, social fissures, perhaps even unrest. Doomers fixate on a horror story that ignores frictions; optimists cling to perpetual brakes even as the barriers erode. Reality? A messy middle ground. Preparing is key: policymakers must shift focus from short-term gains to long-term resilience, investing in education, income supports, and equitable innovation. In my late-night reflections, I worry our leaders are distracted by headlines, not harbingers. 2026’s AI narrative isn’t binary; it’s human, complex, and demanding engagement we can’t afford to ignore.

In the bustling tech corridors of Seattle, where coffee shops buzz with startup pitches and AI demos, I often find myself reflecting on the kind of future we’re hurtling toward. As Oren Etzioni, a thinker who’s watched the industry evolve from floppy disks to neural networks, I can’t shake the feeling that we’re standing at a crossroads. Economists across think tanks and universities are increasingly sounding the alarm: artificial intelligence will automate white-collar jobs at an exponential rate, crushing wage growth, obliterating consumer spending power, and potentially dragging the global economy into a pit of collapse. The logic seems unavoidably straightforward—if machines can reason, analyze data, and make decisions like humans, why shell out salaries for brainpower we can outsource to code? It’s a compelling narrative, akin to those dystopian tales that keep us up at night, like the infamous 2028 Global Intelligence Crisis simulation that went viral on forums and social media. In it, AI feeds on its own improvements, looping into a recursive intelligence explosion that displaces entire workforces, leaving unemployment lines longer than Seattle’s famous traffic queues and aggregate demand spiraling into oblivion. The “displacement doomers,” as I’ve come to call them, have mastered the art of terrifying rhetoric—the punchline is simple and visceral: humans become obsolete, and society pays the price. But as someone who’s collaborated with AI researchers, attended conferences where job displacement stories are shared like war tales, and even lost a mentor to industry layoffs, I see the human cost beneath the headlines. Families stressed by unexpected bills, dreams deferred by skill gaps—it’s not just data points; it’s lives unraveling. This fear feels immediate, especially as I chat with friends in finance whose roles are morphing into something unrecognizable, or educators grappling with curricula that can’t keep pace with AI tutors. 
Yet, buried in the anxiety is a spark of curiosity: Is this doom preordained, or can we steer it toward something more equitable? It’s a question that tugs at me personally, reminding me of my early days in academia when optimism about technology overshadowed the shadows it cast.

As I stepped back from the edge of that cliff, a contrarian voice emerged in early 2026 from the sharp minds at Citadel Securities, a market-making giant. Their note, titled The 2026 Global Intelligence Crisis, offered a counterpunch that felt like a breath of fresh air, pushing against the doomers with reasoned economics and a dose of pragmatic hope. Their core thesis? Sure, AI might self-improve at dizzying speeds, but businesses won’t, or can’t, adopt it with the same reckless haste. It’s not malice; it’s math and reality colliding. Technological leaps that boost productivity typically expand the marketplace, flooding it with more goods, services, and yes, even jobs in adjacent fields. Think of the printing press: it democratized knowledge but also created roles in publishing, distribution, and oversight. Citadel paints a picture grounded in history: when machines make humans more efficient, economies grow, not shrink. I’ve seen echoes of this in my own career; integrating early AI tools into research projects didn’t eliminate my role but amplified it, letting me focus on the human elements machines struggle with, like ethical judgment or creative synthesis. The note highlights institutional friction as the unsung hero. Companies face real hurdles in swapping humans for algorithms: the chaos of retraining teams, debugging flawed systems, navigating regulatory mazes, or the simple fact that employees push back against change out of ego, fear, or plain exhaustion. As someone who’s advised startups on AI transitions, I recall a client who delayed a major rollout after realizing their AI couldn’t handle the nuances of customer emotions, costing them millions in wasted effort. Citadel argues that democratic societies will adapt too, with policies like retraining programs or transitional supports kicking in over time. It’s a humanizing lens, acknowledging that economies bend but don’t break overnight under technological pressure. 
Hearing this, I felt a flicker of optimism—perhaps the doomers are overdramatizing a bumpier road rather than an abyss. Yet, in conversations with industry colleagues, I hear undertones of skepticism; Citadel’s assurances feel too neat, as if ignoring the visceral uncertainty of those directly impacted. It’s not just about graphs; it’s about the barista wondering if her tips will evaporate next quarter.

But here’s where Citadel’s optimism reveals a chink— a gaping hole that, frankly, drives me crazy when I dwell on it. Their most innovative argument revolves around what they call the “compute-cost ceiling,” an economic safeguard that feels clever on paper but crumbles under scrutiny. The idea is this: If corporations jam-pack AI into every operation at once, the surge in demand for computing power will skyrocket prices, making it pricier to run the machines than to keep paying human wages. At that inflection point, automation stalls—a natural brake engineered by market forces. It’s a compelling thought experiment, one that nods to economic equilibrium where supply and cost balance out. In theory, it buys us time for society to adjust, tempering the doomers’ hysteria with rational limits. As someone who’s navigated tech investments through boom-and-bust cycles, I can appreciate the allure of such brakes; they’ve kept past innovations like robotics from fully derailing labor markets, at least not immediately. I remember a colleague debating this over beers after a long day, likening it to traffic laws that prevent highway pileups—essential, yes, but sometimes overridden by real-world chaos. Citadel dismisses the rush to automate as overly simplistic, emphasizing that businesses prioritize stability over disruption. Yet, this argument overlooks the lived realities of today’s tech ecosystem, where motives like profit margins and competitive edges override caution. I’ve witnessed executive boardrooms where the pressure to innovate trumps due diligence, leading to rushed AI deployments that backfire spectacularly. It’s human nature at play: ambition, greed, and the fear of falling behind fuel decisions that erode theoretical ceilings. Citadel seems to assume a static world, but as I reflect on the fluidity of change, it feels like they’re clutching at solutions that are already dissolving. 
This hole isn’t just annoying; it’s a reminder that theories must grapple with human impulses, not just equations.

Driving straight into that hole, and yes, I’ll admit to feeling a bit dramatic about it, is the undeniable reality of plummeting compute costs, a trend so rapid that it turns Citadel’s “ceiling” into little more than a temporary speed bump on a downhill freeway. The venerable Moore’s Law, popularly summarized as compute costs halving every 18 months and a defining force in computing for half a century, has officially bowed out, but AI has birthed its own version, where costs for tasks like running large language models (LLMs) tumble by a factor of 10 annually. Andreessen Horowitz coined a clever term for this, “LLMflation,” and their data backs it up: a jaw-dropping 1,000x drop in per-token inference costs over just three years. I’ve tracked this personally, from my initial forays into AI in the mid-2010s, when a single model run could drain a research budget, to now, when experiments are affordable enough for bedroom startups. Sure, frontier AI models are gobbling more “reasoning tokens” per query as they grow more capable, meaning cost per task isn’t dropping as sharply, but even so, it’s still heading south at a breakneck pace. A ceiling that recedes by 10 times every year? That’s no barrier; it’s an invitation to accelerate. Algorithmic innovations like smarter neural architectures, hardware breakthroughs such as specialized AI chips that squeeze more operations into less power, quantization techniques that shrink models without losing potency, and distillation methods that pare down complexity all intertwine in a relentless downward spiral. Compounding it is the fierce price war among inference providers, with giants like Google and Amazon slashing rates to capture market share, creating a race to the bottom. In my daily work, this feels exhilarating yet unsettling; I’ve advised founders who’ve cut their prototypes’ costs by 90% in months, enabling projects that would have been pipe dreams before. 
It’s a human story of ingenuity—engineers burning the midnight oil, hackers refining code in garages, entrepreneurs betting fortunes on the next big optimization. This isn’t some distant abstraction; it’s the pulse of progress I’ve sensed at every conference and prototype demo. Citadel might invoke brakes for comfort, but as costs free-fall, those frictions erode, leaving us barreling toward change faster than anyone predicted. It’s messy, personal, and utterly transformative—the kind of disruption that keeps me up at night, wondering how we’ll adapt as individuals and a society.
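The caveat about reasoning tokens comes down to simple arithmetic: cost per task is the price per token multiplied by the number of tokens a task consumes. The Python sketch below illustrates that relationship. The tenfold price drop is the article's figure; the fourfold growth in tokens per task is a purely hypothetical number chosen for illustration, not a measurement.

```python
def per_task_drop(price_drop_per_token: float, token_growth_per_task: float) -> float:
    """How many times cheaper a task becomes.

    Cost per task = price per token * tokens per task, so the net
    improvement is the price drop divided by the growth in tokens used.
    """
    return price_drop_per_token / token_growth_per_task


PRICE_DROP = 10.0    # article's figure: per-token price falls about 10x per year
TOKEN_GROWTH = 4.0   # hypothetical: a frontier model uses 4x more tokens per task

net = per_task_drop(PRICE_DROP, TOKEN_GROWTH)
print(f"Net per-task cost improvement: {net:.1f}x cheaper")
```

With these illustrative inputs the per-task cost still falls 2.5-fold in a year: slower than the per-token headline, but still a steep decline.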

Yet, even as costs crumble and innovation charges ahead, the true heart of the matter lies in the human equation: distribution of wealth and opportunity, a layer Citadel’s note barely acknowledges. They dust off John Maynard Keynes’s 1930 prophecy of a utopian 15-hour workweek, arguing society will adapt because human desires for “more stuff”—fancy gadgets, luxurious homes, exotic vacations—will sustain demand indefinitely. It’s a nod to consumerism’s engine, one I understand intimately; after all, I’ve delighted in the convenience of voice-activated assistants that sync my calendar or recommend books, craving that next digital upgrade. But as someone attuned to societal divides, I see the gaping flaw: This assumes gains trickle down fairly, that everyone has the income to indulge in excess. If AI’s windfalls funnel upward to the elite 0.1%—moguls like Musk amassing fortunes from rocket tech or Bezos from e-commerce empires—while the bottom 99% wrestle with stagnant wages or outright job loss, the economy starves from lack of buyers. Those titans can’t possibly consume enough; their appetites are finite, and society spirals into imbalance. From my perspective, shaped by discussions with community leaders in economically strained areas, it’s a distribution disaster mirroring past industrial revolutions, where wealth balloons for the privileged as masses suffer. I’ve mentored young professionals in tech hubs who thrive, but also spoken to families in rural towns displaced by automation, their stories resonating with frustration over unaffordable housing or kids’ educations slipping away. Policies hush the gap; token retraining falters without universal support. It’s personal—my own reflections on privilege remind me that equitable prosperity demands deliberate intervention, not passive hope. Citadel’s optimism dances around the edges, overlooking how AI amplifies inequities, potentially igniting social unrest from disenfranchised populations. 
In a world increasingly polarized, ignoring the distribution dilemma isn’t just intellectually lazy; it’s socially reckless.

So, as we navigate this AI-infused horizon in 2026, the Citadel note provides a valuable anchor, spotlighting frictions that temper the doomers’ frenzy: bureaucratic delays, worker pushback, and eventual policy interventions in democratic systems that could promote jobs programs, universal basic income trials, or AI-tax redistribution. These are tangible buffers, buying society precious breathing room to adapt. As someone invested in shaping tech’s future, I applaud this pragmatic realism; it’s a human acknowledgment that change need not be apocalyptic, that societies can and do course-correct with forethought. Yet the opposing currents (algorithmic efficiencies, hardware revolutions, and cutthroat competition) forge onward unabated, eroding those brakes as costs tumble year after year. Displacement doom, though overstated in immediacy, is inexorably approaching, heralding not just economic upheaval but potential societal fractures: upended hierarchies, strains on mental health, even civic unrest as identities clash with obsolescence. Doomers imagine a catastrophe with no frictions; optimists like Citadel presume eternal safeguards amid shifting sands. The truth, as I’ve come to see through a blend of personal experience and data, lies in the messy in-between, a crucible of adaptation requiring proactive measures. Policymakers must pivot from peripheral distractions, such as buzzworthy AI ethics debates or geopolitical tech rivalries, to core strategies: investing in lifelong education for reskilling, building social safety nets against displacement, and fostering policies that democratize AI’s rewards. As someone who’s navigated tech’s highs and lows, I believe preparing for this upheaval isn’t optional; it’s essential for a future that honors human dignity amid the machine. It’s a call to action, underscored by urgency, lest we wake to a world reshaped without intention.