The Spectacle in Oakland: Billionaires and Bots on Trial

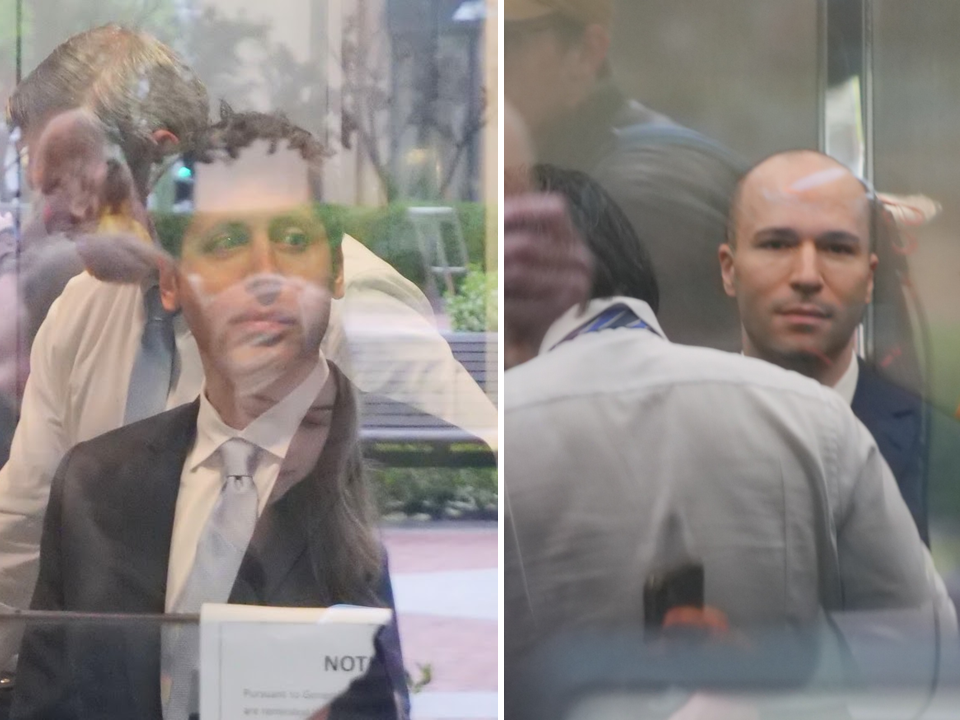

In the heart of Oakland, California, a courtroom drama unfolds that feels like a blockbuster movie scripted from tech’s wildest dreams and nightmares. Picture this: Sam Altman, the charismatic CEO of OpenAI, strides into U.S. District Judge Yvonne Gonzalez Rogers’ courtroom, dressed in a sharp dark suit and light blue tie, scrolling nonchalantly on his phone while waiting for jury selection to kick off. Beside him is Greg Brockman, the company’s president. Both were once innovators in a nonprofit venture; both are now entangled in a bitter legal feud with the world’s richest man, Elon Musk. It’s Monday morning, and the air is thick with anticipation for what’s dubbed the “AI Trial of the Century.” At stake isn’t just money, potentially billions, but the very soul of artificial intelligence’s development. Did OpenAI, the AI lab Musk co-founded with altruistic intentions, betray its nonprofit roots by morphing into a profit-driven powerhouse backed by Microsoft’s deep pockets? And was Microsoft knowingly complicit in this shift, abandoning the mission for cold, hard cash?

The lawsuit pits Musk against Altman and Brockman personally, accusing them of breaching a charitable trust by steering OpenAI away from its original promise of safe, open-source AI. Microsoft, having poured over $13 billion into the partnership since 2019, faces charges of aiding and abetting that betrayal. It’s a clash of egos and empires, with supporting roles played by tech titans like Microsoft boss Satya Nadella, who isn’t in court yet but looms large as a potential witness. Current and ex-OpenAI executives, board members, and even regulators are poised to weigh in. Outside the courthouse, protesters from the Tesla Takedown group rally under “Whoever Wins, We Lose,” reminding everyone that this billionaire battle over AI’s future might sideline everyday folks grappling with job losses, privacy invasions, and ethical dilemmas. One could imagine the protesters shaking their fists, channeling the frustration of nurses overwhelmed by AI glitches or families worried about digital divides. Inside, though, the scene is more subdued—a crowd of lawyers, journalists, and spectators crammed into an overflow room, watching the democratic ritual of jury selection unfold like a real-life episode of “Boston Legal.”

As prospective jurors file in, Judge Rogers lays out the case plainly: it’s about promises made and promises broken, not just tech wizards and algorithms. She grills the pool of about 150 ordinary Californians on their knowledge of AI, big tech companies, and the personalities involved. It’s a humanizing moment, pulling back the curtain on how regular people process the hype. One man, proud of his newspaper subscription, draws cheers from reporters but spits venom at Musk, comparing him to a certain politician: “Elon doesn’t care about people; he cares about money.” His words echo the public’s disillusionment. A nurse highlights how AI at work means extra hours double-checking errors, turning what should be assistance into added drudgery. Another juror, asked about teamwork, innocently mistakes the question for the app Microsoft Teams, prompting the judge’s playful quip, “Microsoft is happy you asked that.” These anecdotes humanize the trial. They are reminders that AI isn’t abstract; it’s infiltrating our lives, jobs, and conversations, sometimes clumsily.

Then there’s the juror worried about the technical jargon, only for the judge to reassure: “This is just a case about promises and breaches of promises.” It’s a poignant simplification, stripping away the code and computations to reveal the core human drama: trust, betrayal, and accountability. One can feel the weight on these jurors’ shoulders; they’re not just picking sides in a corporate spat, but potentially influencing how AI evolves. As they scroll through questions, do they think of their own brushes with Musk’s Tesla cars or Altman’s generative AI tools? It’s not just about evidence—it’s about seeing themselves in the story. By the end of the day, nine jurors will be chosen to decide, their lives intersecting with the fates of tech moguls who shape our future, one algorithm at a time.

Microsoft’s High Stakes Gamble in the AI Revolution

Amid the headline-grabbing feud between Musk and Altman, Microsoft might appear as a side player, but its role is the beating heart of this trial. The company, led by Satya Nadella, has bet its future on AI, sinking more than $13 billion into OpenAI since 2019, weaving the partnership into everything from Bing to Azure. They built products around it, competition around it, even as whispers of betrayal surfaced. Now, a Musk victory could force Microsoft to slice off a chunk of that partnership’s value—valuable enough to cripple their strategic edge. Musk’s experts eye up to $134 billion in combined damages, with Microsoft’s share potentially hitting $25 billion or more. But Judge Rogers has already poked holes in these numbers, calling them “pulled out of the air,” while Microsoft insists the calculations are “unverifiable and unprecedented.” Imagine the boardroom anxiety: Nadella’s team hedging bets with rivals like xAI and in-house models like Copilot, all while defending against antitrust probes in the U.S. and Europe.

What hangs in the balance is more than money; it’s the blueprint for big tech investing in “mission-driven” AI labs. If Musk wins, it might force companies like Google or Amazon to second-guess partnerships, fearing lawsuits that unravel innovation. There’s irony here, too—a twist this very Monday morning, when Microsoft and OpenAI quietly announced a “major amendment” to their alliance, loosening ties in a move that screams disconnect. Was it timed to signal independence, or sheer coincidence? For ordinary investors and employees, this trial spells uncertainty: stock dives, layoffs in AI sectors, and questions about whether profit trumps ethics. A loss for Microsoft could embolden regulators, turning the courtroom into a battleground for AI governance. One can almost hear the casual observer pondering, “Is this really about good AI, or just who pockets the profits?” It humanizes the stakes—beyond executives hoarding wealth, it’s about how these decisions ripple into our daily tech interactions, from unbiased search results to equitable job markets.

The Defense: Innocence Claimed in the Shadows of Emails

Microsoft’s defense is a tale of plausible deniability, painting themselves as blind investors caught up in the whirlwind. They argue they were mere commercial partners, never privy to the “charitable restrictions” on Musk’s founding donations or the fiduciary duties to him as a benefactor. Testimony from former OpenAI CTO Mira Murati seems to bolster this, claiming she never shared those details with Microsoft reps. But drama erupted over the weekend—a discrepancy in her deposition transcript. Her words denying disclosure were audible on video but mysteriously missing from the written record. Was it a clerical error, or something more sinister? Microsoft is crying foul, using it to question the opposition’s credibility. They also point to their neutrality, hosting Musk’s xAI Grok model on Azure, proving they’re a platform, not a partisan.

Yet the most intriguing revelations come from behind-the-scenes emails, humanizing the corporate intrigue. A 2018 internal Microsoft memo from CTO Kevin Scott to Nadella flags unease about OpenAI’s pivot: “I wonder if the big OpenAI donors are aware of these plans? Ideologically, I can’t imagine that they funded an open effort to concentrate ML talent so that they could then go build a closed, for profit thing on its back.” Scott expressed concerns about betrayal, but Microsoft invested billions anyway. In depositions, Nadella deflected, saying the nonprofit board makes decisions, and he didn’t recall raising Scott’s worries with Altman. Emails from 2023’s board crisis show Nadella’s team weighing in on OpenAI’s ousting of Altman, blurring lines between partnership and meddling. Microsoft claims all this shows due diligence, not guilt: they asked questions, got assurances, and legally relied on OpenAI’s promises.

It’s a narrative of trust misplaced, exposing how even giants stumble in the fog of innovation. One can empathize with Nadella’s tightrope: balancing ambition with ethics, where a wrong step could expose billions in risk. The procedural ace up Microsoft’s sleeve? Statute of limitations, citing Musk’s own 2020 tweet admitting OpenAI was “essentially captured by Microsoft.” If he knew three years before suing, the claims vanish. But Musk’s team pivots to that smoking-gun email—proof Microsoft pondered the betrayal but pushed on. It all feels personal, like friends falling out over shared dreams, reminding us that beneath the brinkmanship, these are people wrestling with power’s temptations.

Musk’s Vision Betrayed: A Nonprofit’s Slide to Profit

Flash back to 2015: Elon Musk, visionary entrepreneur behind Tesla and SpaceX, co-founds OpenAI with a noble mission—developing AI safely, openly, for humanity’s benefit. He pours in tens of millions as the nonprofit takes shape. By 2018, Musk departs the board, growing uneasy about the direction. Fast-forward to late 2024, and he sues, alleging Altman and crew hijacked OpenAI, turning it into a for-profit entity through a capped-profit subsidiary, funneling gains to investors while ditching the altruistic roots. Musk claims this enriched Altman and others unjustly, breaching the trust he funded. It’s a David-vs.-Goliath turn: Musk the founder feeling betrayed, fighting what he’s built.

Imagine Musk’s journey from starry-eyed idealist to disillusioned billionaire, tweeting frustrations and starting xAI as a rival. His lawsuit isn’t just revenge; it’s a crusade against AI’s commercialization, fearing dangers for society. Critics see hypocrisy in a man who profits from Tesla and SpaceX, but his narrative resonates with those weary of tech monopolies. The trial exposes OpenAI’s evolution: from Microsoft partnership to global powerhouse, ChatGPT sparking debates on bias, jobs, and ethics. For everyday users, it’s relatable. Musk’s story mirrors our own quandaries with tech: trust eroded by profit motives. Whether he’s the hero uncovering a scam or the villain nursing sour grapes, his presence looms, absent for jury selection but slated to testify, adding personal drama to the proceedings.

A Verdict Awaits: Shaping AI’s Tomorrow

As the trial heats up, Judge Rogers sets expectations for a May 21 wrap-up: three weeks of evidence and witness testimony, followed by nine jurors deliberating. If Musk prevails, a second phase determines payouts, with OpenAI’s nonprofit potentially billions richer. The implications extend far: not just for Microsoft and OpenAI, but for AI governance worldwide. Will this trial foster transparency in tech alliances, or chill innovation? Regulators watch, arming for antitrust battles; startups wonder if partnering with big tech is worth the risk. For the public, it’s a wake-up call. AI’s future isn’t just coded by algorithms, but by human choices in courtrooms.

Consider the jurors’ burden: ordinary people navigating complex evidence, their biases on display from the morning’s chatter. The nurse checking AI errors, the newspaper reader disdaining Musk, the judge’s quip about Teams: they’re mirrors of society’s divide. A verdict could empower voices demanding ethical AI, or entrench corporate dominance. Protests outside remind us that billionaires battling might mean losses for all, from overworked nurses to unemployed coders. In Oakland’s courthouse, the “AI Trial of the Century” isn’t just about money. It’s a human story of ambition, betrayal, and accountability, deciding who controls the tech shaping our lives. Whoever wins, the real gamble is on a future where AI serves us all.
