The AI Security Arms Race: Microsoft’s MDASH Edges Out Anthropic’s Mythos in a Cyber Showdown

It’s May 2026, and artificial intelligence is no longer a sci-fi dream; it’s rewriting the rules of cybersecurity, one vulnerability at a time. Journalist Todd Bishop reports that Microsoft’s newly introduced AI system, codenamed MDASH, has just topped the leaderboard on a key benchmark. The story unfolds against a backdrop of rapid AI advances, where bots are no longer just chatting with us; they’re probing our digital defenses like expert hackers. At the heart of it all is a system that uses over 100 specialized AI agents, collaborating across multiple models to unearth software weak points faster than any single AI could. This isn’t just tech jargon; it’s a real shift in how we protect, or exploit, our digital world. Microsoft isn’t bragging idly: it has backed the claim by disclosing 16 new vulnerabilities found across Windows versions, including some “critical” remote code execution flaws that were patched in time for Patch Tuesday. For a company that has faced its fair share of security criticism, this feels like a bold redemption arc, and it suggests that collective AI smarts might just outpace individual heroics.

But let’s break down what makes MDASH tick, because it’s not just a powerful tool; it’s a masterclass in AI teamwork. Picture a staged pipeline. First, specialized agents dive into codebases, hunting for potential vulnerabilities with laser focus. These aren’t generic chatbots; they’re tailored experts in static analysis, dynamic testing, and more. After the initial scans, a second set of agents steps in, like a digital debate club, arguing over whether each finding is a real threat or just noise. Then comes the grand finale: constructing proof-of-concept attacks to confirm that the surviving findings hold up. It’s collaborative, efficient, and eerily human in its approach, mimicking how security teams might brainstorm in a conference room. Microsoft drew inspiration from the very criticism it has endured over the years, betting that diversity in models prevents the blind spots that plague solo systems. This multi-model setup, reminiscent of real-world teamwork, offers a kind of redundancy and refinement that a single-model system like Anthropic’s Mythos does not have by design. It’s a reminder that in the AI world, unity might just be the ultimate superpower, turning vulnerabilities into victories before they become breaches.
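To make the three stages concrete, here is a toy sketch in Python. It is not Microsoft’s implementation, and nothing about MDASH’s internals beyond the scan, debate, and proof-of-concept stages is known from the reporting. Every name below (`Finding`, `make_pattern_scanner`, `run_pipeline`, the reviewers, and the stub validator) is hypothetical, and the "agents" are plain functions standing in for what would be model-backed components.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    location: str     # where the candidate bug was seen, e.g. "line 2"
    description: str  # what the scanner thinks is wrong
    snippet: str      # the offending source line


# Hypothetical agent roles, modeled as plain callables.
Scanner = Callable[[str], List[Finding]]  # source text -> candidate findings
Reviewer = Callable[[Finding], bool]      # True means "this looks real"
Validator = Callable[[Finding], bool]     # True means "proof of concept worked"


def make_pattern_scanner(pattern: str, description: str) -> Scanner:
    """Build a deliberately naive scanner that flags any line containing pattern."""
    def scan(source: str) -> List[Finding]:
        return [
            Finding(f"line {number}", description, line.strip())
            for number, line in enumerate(source.splitlines(), start=1)
            if pattern in line
        ]
    return scan


def run_pipeline(source: str, scanners: List[Scanner], reviewers: List[Reviewer],
                 validator: Validator, min_votes: int) -> List[Finding]:
    # Stage 1: every scanner proposes candidates; exact duplicates are merged.
    candidates: List[Finding] = []
    for scan in scanners:
        for finding in scan(source):
            if finding not in candidates:
                candidates.append(finding)
    # Stage 2: the "debate". A finding survives only with enough reviewer votes.
    debated = [f for f in candidates
               if sum(review(f) for review in reviewers) >= min_votes]
    # Stage 3: keep only findings the validator confirms with a proof of concept.
    return [f for f in debated if validator(f)]


SAMPLE = """char buf[8];
strcpy(buf, user_input);
fgets(line, sizeof line, stdin);
"""

scanners = [
    make_pattern_scanner("strcpy(", "unbounded copy"),
    make_pattern_scanner("gets(", "use of gets"),  # also matches fgets: noise
]
reviewers = [
    lambda f: "fgets(" not in f.snippet,  # knows fgets takes a size limit
    lambda f: "sizeof" not in f.snippet,  # trusts calls that pass a size
    lambda f: True,                       # credulous reviewer, always agrees
]


def stub_validator(finding: Finding) -> bool:
    # Stand-in only: a real validator would build and run an actual exploit.
    return "strcpy(" in finding.snippet


confirmed = run_pipeline(SAMPLE, scanners, reviewers, stub_validator, min_votes=2)
for finding in confirmed:
    print(finding.location, "|", finding.description, "|", finding.snippet)
```

In this toy run the second scanner wrongly flags the `fgets` call, two of the three reviewers vote it down, and only the `strcpy` line reaches the validator. That is the point of the debate stage as described: independent reviewers with different blind spots filter out noise before the expensive confirmation step.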

Comparisons are inevitable, especially when MDASH is going head-to-head with rivals like Anthropic’s Mythos. Mythos, which sent shockwaves earlier in the year with its vulnerability-finding prowess, relies on a single powerful AI model wrapped in an agent framework. While it raised eyebrows, it was released selectively to an elite group of companies through Project Glasswing, a consortium that includes Microsoft itself. Yet MDASH’s multi-agent approach seems to have punched above its weight, demonstrating that quantity and specialization can trump raw power in certain scenarios. Other contenders, like OpenAI’s GPT-5.5, are also single-model systems and trail on the leaderboard. This isn’t just a tech rivalry; it’s a philosophical debate about AI design. Should we go for jack-of-all-trades models that excel in generality, or modular specialists that excel in depth? Microsoft’s win suggests the latter is gaining ground, but it also highlights the uneven playing field: scores are self-reported, with no independent verification yet, making the benchmark more of a bragging board than a definitive judge.

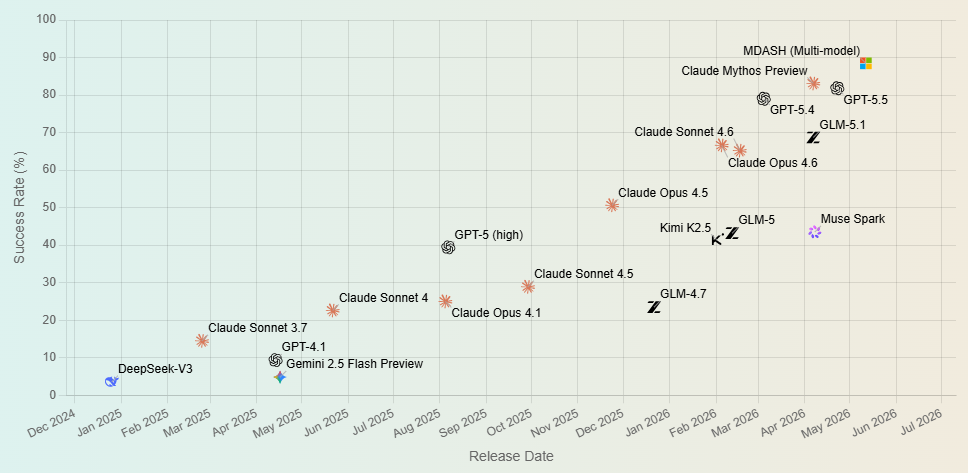

Stepping back, the CyberGym benchmark itself is worth appreciating as a real marker of AI maturity in cybersecurity. Developed by researchers at UC Berkeley, it throws down the gauntlet with 1,507 tasks across 188 open-source projects, testing whether an AI can reproduce known vulnerabilities in unpatched code by crafting working exploits. MDASH rocketed to an 88.45% success rate, a whopping leap from earlier results, while Mythos came in at 83.1% and GPT-5.5 at 81.8%. It feels tangible: you give the AI a bug description and a codebase, and watch it simulate the attack. In human terms, think of it as AI putting on a hacker’s hat and wearing it responsibly, turning theoretical threats into practical defenses. The rapid improvement in these scores over time, as shown in the accompanying charts, paints a picture of exponential growth, where AI vulnerability discovery is accelerating at a pace that invites comparison with Moore’s Law. Yet it’s not without caveats: the scores are company-reported, and although the benchmark code is public for anyone to test, real-world performance might differ. It’s exciting, but also a wake-up call, because AI is getting scarily good at this game.
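For a sense of scale, the reported percentages can be turned into approximate task counts. This is a back-of-the-envelope sketch that assumes each score is simply the share of all 1,507 tasks solved; the reporting does not spell out how the scores were computed, so treat the counts as estimates rather than published figures.

```python
TOTAL_TASKS = 1507  # CyberGym task count cited above

# Self-reported success rates, in percent, as quoted in the article.
reported = {"MDASH": 88.45, "Mythos": 83.1, "GPT-5.5": 81.8}

# Assumption: score = tasks solved / total tasks, rounded to a whole task.
implied = {name: round(TOTAL_TASKS * pct / 100) for name, pct in reported.items()}

for name, solved in implied.items():
    print(f"{name}: about {solved} of {TOTAL_TASKS} tasks")
# MDASH: about 1333 of 1507 tasks
# Mythos: about 1252 of 1507 tasks
# GPT-5.5: about 1233 of 1507 tasks

gap = implied["MDASH"] - implied["Mythos"]
print(f"MDASH lead over Mythos: about {gap} tasks")
# MDASH lead over Mythos: about 81 tasks
```

Seen this way, the 5.35-point gap between MDASH and Mythos corresponds to roughly 81 additional tasks out of 1,507, which is meaningful but small enough that independent verification would matter.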

This breakthrough, however, isn’t all sunshine and patches. As Bishop’s piece pointedly notes, it stirs up deeper concerns about AI as a double-edged sword in the cybersecurity fray. The tools that help defenders find flaws today could empower attackers tomorrow. MDASH’s capabilities, for instance, could be weaponized if they fall into the wrong hands, amplifying the risks of AI-driven cyberattacks. Microsoft is acutely aware of this, positioning MDASH as an internal asset for its security teams and teasing a limited private preview for select customers. The company is transparent about the implications, warning that Patch Tuesdays might grow rowdier as AI uncovers more bugs more quickly. It’s a human dilemma: how do we harness this power without opening a Pandora’s box? Ethical deployment, restricted access, and robust oversight become crucial, echoing broader debates about AI safety. Imagine a future where hackers use similar tech to automate breaches. It’s not fiction; it’s feasible. Microsoft’s approach, while promising, underscores the need for global standards to ensure AI serves humanity, not just high scores.

Looking ahead, this MDASH win signals a turning point in the AI-security landscape, one that’s as thrilling as it is unsettling. For average users, it means software might get safer faster, but it also demands vigilance against over-reliance on tech that could evolve unpredictably. Industry leaders like Microsoft and Anthropic are pushing boundaries, but collaboration and transparency must keep pace. We might see more multi-model innovations, perhaps inspiring hybrid systems that blend human expertise with AI precision. It’s a reminder that in our interconnected world, security isn’t just a tech issue; it’s a human one, requiring empathy, ethics, and foresight. As AI continues to evolve, so must our strategies, ensuring that benchmarks like CyberGym become tools for unity rather than division. In the end, MDASH’s triumph isn’t just about beating a benchmark; it’s about empowering us to build a more secure digital future, one debate, one agent, and one patch at a time.