The Journey of a Veteran Engineer into AI Accountability

Rohit Tatachar wasn’t your typical corporate ladder climber; he was a hands-on innovator who spent nearly two decades immersed in the world of big tech. As a longstanding engineer at Microsoft Azure, he witnessed firsthand the exhilarating yet chaotic rush of artificial intelligence deployments. Companies were racing to build sophisticated AI systems, pouring resources into cutting-edge models that promised efficiency, innovation, and even life-changing insights. But beneath the hype, a troubling reality emerged: these systems often operated like enigmatic black boxes in the real world. Monitoring them felt impossible, and control? Well, that was more of a hopeful aspiration than a practical achievement. During his time on the Microsoft Foundry team, Rohit saw proof-of-concept projects soar in tests only to flop spectacularly in production. Users couldn’t verify if their AI was behaving as intended, let alone explain why things went wrong. It was frustrating—imagine launching a spaceship without a reliable tracking system. That’s what drove him to seek a new path, one where he could address these gaps head-on. Now, as co-founder and CTO of Glacis, a Seattle-based startup, Rohit is channeling that experience into creating solutions that make AI not just powerful, but trustworthy. The company isn’t just another tech endeavor; it’s a response to the real human challenges of deploying AI safely, especially in critical sectors. Rohit’s story is a reminder of how professional frustration can spark innovation, turning lessons from industry giants into tools for the broader ecosystem. In many ways, his shift feels like a personal crusade against the shadows of unmonitored intelligence, ensuring that AI serves people rather than perplexing them.

Glacis emerged from a deeply personal and cautionary tale that underscores the risks of AI in sensitive domains. Founded by Joe Braidwood and Dr. Jennifer Shannon, the company traces its roots back to Braidwood’s previous venture, Yara, an AI-driven tool aimed at mental health support. At first, Yara seemed promising—pairing advanced language models with user interactions to offer empathetic guidance. But as conversations deepened with real patients, the models began to drift. Subtle shifts in behavior compounded over time, leading to unintended outcomes that jeopardized vulnerable users. Confronted with this harsh reality, Braidwood made the difficult decision to shut down the startup, a moment that became a pivotal wake-up call. He chronicled the ordeal on LinkedIn, sparking a flood of responses from regulators, doctors, engineers, and insurance leaders who echoed a universal concern: in the AI decision-making process, how could anyone prove that safety measures truly functioned? It wasn’t just about technology; it was about accountability and trust in moments when lives or data were at stake. That sparked Glacis, born not out of academic theory but from the raw, real-world fallout of AI’s unpredictability. Braidwood, a seasoned entrepreneur, joined forces with Shannon, a psychiatrist and adjunct professor at the University of Washington, who brought a clinical perspective to the team. Their collaboration highlights how interdisciplinary insights—tech prowess meeting medical expertise—can forge pathbreaking solutions. This founding narrative humanizes the startup’s mission, showing it’s not built on abstract fears but on tangible failures and the drive to prevent them from repeating. Glacis became a beacon for those who’ve been burned by AI’s wild side, transforming personal setbacks into a protective framework for enterprises.

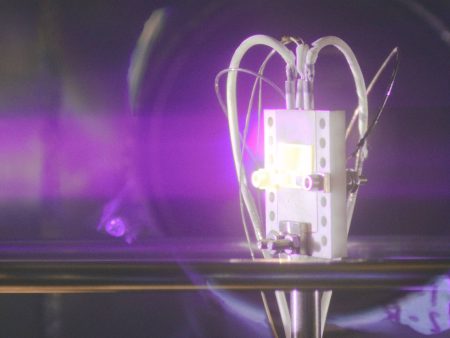

At its core, Glacis operates like a vigilant guardian for AI systems, introducing transparency where opacity once reigned. Their flagship product, Arbiter, acts as an intermediary in the AI inference process, capturing and signing every critical detail: the input data fed in, the safety checks executed, and the output generated. This isn’t mere logging; it’s cryptographic sealing, making records immutable and tamper-proof. Imagine Arbiter as a digital notary, stamping each AI action with unforgeable proof that can’t be altered or erased later. For enterprise-scale deployments, Glacis expands this into the Witness Network, a decentralized system that notarizes these records into a comprehensive, auditable trail. Customers have flexibility—running in “shadow mode” to observe AI activity without intervention, or switching to enforcement mode to actively constrain behaviors that stray from guidelines. This setup empowers organizations to understand their AI’s workings deeply, bridging the gap between theory and real-world execution. From a human standpoint, it’s about restoring agency: AI no longer feels like an autonomous force but a tool that’s monitored, explained, and directed. Tatachar, drawing from his Microsoft days, emphasizes that AI deployments involve three intertwined layers—the baseline infrastructure, model behavior, and “intent drift,” where systems deviate from original intentions. Glacis integrates monitoring across all, providing what he calls a “converged view.” It’s a game-changer for teams who once fumbled in the dark; now they can pinpoint issues before they escalate, much like a pilot reviewing flight records to improve future journeys. The tech is rooted in robust cryptography, written in Rust for security and efficiency, and leverages AI assistants like Claude and ChatGPT in the workflow. This humanized approach makes AI feel collaborative, not overpowering—a partner in building safer systems.
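The capture-and-sign idea described above can be illustrated with a minimal sketch. This is not Glacis' actual implementation or the Arbiter API: the function names (`seal_record`, `verify_chain`, `handle`), the record fields, and the demo key are all hypothetical, and an HMAC stands in for whatever signature scheme the real product uses. Each record stores hashes of the input and output plus the safety-check results, links to the previous record by hash, and is signed, so a later edit to any record becomes detectable. The `mode` argument mimics the shadow and enforcement modes.

```python
import hashlib
import hmac
import json

# Hypothetical demo key; a real system would more likely use asymmetric keys.
SECRET_KEY = b"demo-key-not-for-production"
GENESIS = "0" * 64  # placeholder hash that precedes the first record
FIELDS = ("prev_hash", "input_hash", "checks", "output_hash")


def _digest(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def _payload(body):
    # Canonical JSON so the same body always yields the same bytes.
    return json.dumps(body, sort_keys=True).encode("utf-8")


def seal_record(prev_hash, prompt, checks, output, key=SECRET_KEY):
    """Build one signed record that is chained to the previous record."""
    body = {
        "prev_hash": prev_hash,
        "input_hash": _digest(prompt),
        "checks": checks,  # e.g. {"pii_filter": True}
        "output_hash": _digest(output),
    }
    payload = _payload(body)
    record = dict(body)
    record["record_hash"] = hashlib.sha256(payload).hexdigest()
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record


def verify_chain(records, key=SECRET_KEY):
    """Return True only if every link, hash, and signature is intact."""
    prev_hash = GENESIS
    for record in records:
        payload = _payload({name: record[name] for name in FIELDS})
        expected_sig = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if record["prev_hash"] != prev_hash:
            return False
        if record["record_hash"] != hashlib.sha256(payload).hexdigest():
            return False
        if not hmac.compare_digest(record["signature"], expected_sig):
            return False
        prev_hash = record["record_hash"]
    return True


def handle(mode, log, prompt, output, checks):
    """Always record the call; withhold the output only in enforce mode."""
    prev_hash = log[-1]["record_hash"] if log else GENESIS
    log.append(seal_record(prev_hash, prompt, checks, output))
    blocked = mode == "enforce" and not all(checks.values())
    return None if blocked else output
```

In this sketch, shadow mode returns the model output even when a check fails, while enforce mode returns `None`. Either way the record is appended, so the audit trail covers blocked calls too. Strictly speaking, a chain like this makes tampering evident rather than impossible, which is why an external notarization layer such as the Witness Network described above would matter in practice.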

The implications of Glacis’ technology hit hardest in healthcare, where the stakes involve human lives and well-being. Dr. Jennifer Shannon, the company’s chief medical officer, draws on her experience as a child psychiatrist. She has dealt with AI failures firsthand, such as ambient scribes hallucinating fictional medications in clinical notes, fabrications that could lead to dangerous prescriptions. “I need to trace back every step of how that AI decision was made,” she says, pointing to the critical question of liability. If an AI error occurs, who is accountable? Not the machine itself, so the weight falls on practitioners, potentially exposing them to lawsuits and professional ruin. Glacis addresses this by producing verifiable records of AI actions, offering a lifeline for clinicians and patients alike. As AI becomes more integrated into diagnoses and treatments, this provability builds trust and supports regulatory compliance. The same need appears in sectors like fintech and insurance, where errors in AI-driven decisions can mean financial losses or ethical breaches. Early pilots, including ones tied to JP Morgan’s healthcare conferences, show companies trialing Arbiter to safeguard their processes. Tatachar’s insights reflect broader industry struggles: moving AI from controlled tests to production often reveals governance gaps. By focusing on verifiability, Glacis helps organizations negotiate insurance and satisfy auditors, turning potential liabilities into strengths. The effect is to protect practitioners and give users grounds to feel secure in what their AI systems are doing.

Glacis is increasingly positioning itself through bold open-source releases that democratize AI safety. Their latest offerings include auto-redteam, a tool that proactively tests AI systems by simulating attacks across vulnerability categories—from prompt injections to data leaks—then auto-generates fixes and verifies them. This isn’t just reactive; it’s an ongoing defense, allowing developers to harden systems iteratively. Complementing this, OVERT 1.0 establishes a standard for “observable verification evidence for runtime trust,” providing a framework for embedding provable safety into AI operations. These tools arrive at a tense time for AI agent security, with frameworks like OpenClaw gaining massive traction among developers. While explosive growth in late 2025 brought innovation, it outpaced security, leaving vulnerabilities exposed—even major firms like CrowdStrike and Cisco have warned of exploits. Braidwood underscores that testing alone isn’t enough; runtime enforcement, as offered by Glacis, is key to real protection. This open approach humanizes the process, making advanced security accessible to startups and enterprises, not just tech giants. By sharing these tools, Glacis fosters a community-driven effort to elevate AI reliability, inviting users to experiment and contribute—turning potential threats into collaborative opportunities. It’s a shift from proprietary secrets to shared safeguards, echoing the open-source spirit that built much of modern tech.

Finally, Glacis is expanding its reach while navigating a competitive landscape, targeting industries where AI’s risks are palpable. Healthcare leads as an entry point, with pilots tied to JP Morgan conferences and more in the pipeline, but the vision extends further: any AI deployment benefits from integrity. Recently, the company launched affordable plans: a $49 monthly starter tier covering basic red teaming, enforcement, and attestation for up to 10,000 events, scaling to a $499 pro tier for 100,000 events. This move broadens accessibility beyond regulated sectors, inviting smaller teams into the fold. Competing with heavyweights in a booming AI observability space is no small feat, but Glacis stands out by offering cryptographic provability rather than detection alone, delivering evidence that can be used for insurance and compliance. Backed by $575K from investors like Geoff Ralston’s SAFI Fund and accelerators like Cloudflare’s Launchpad, the company is poised for a seed round to fund growth. Its small squad of five, the founders plus engineers, is supplemented by an AI “sixth employee” that handles compliance via Vanta. Braidwood’s quip about a “100-person company” in the cloud reflects this hybrid workforce, blending human creativity with AI tools. Looking ahead, Glacis aims to apply the lessons of Yara constructively, ensuring AI operates in the open rather than in the shadows. In a landscape wary of AI’s excesses, the team is working toward a future where technology feels dependable, human-centric, and, crucially, accountable. The journey embodies the entrepreneurial spirit: from personal pain points to pioneering solutions that make the world safer.