I’ve always been fascinated by the way technology sneaks into our daily habits, almost without us noticing. A few weeks ago, I wrote about how AI can be your writing coach, sharpening your thoughts instead of just spitting out words for you: a treadmill that makes you do the work, not a ride that carries you. But that was only one side of the story. Now let’s flip the coin and talk about reading. In a world where we’re drowning in emails, reports, articles, and endless PDFs, AI isn’t just a tool; it’s becoming our lifeline. The big question isn’t whether to use it, but how to wield it so that we become sharper, more insightful readers rather than lazier ones who outsource our brains.

Back in 2006, when I was teaching at UW, my students and I were researching “machine reading.” We were pioneering ways for computers to understand and process text autonomously, an idea that felt groundbreaking at the time. Fast-forward two decades, and here we are with large language models (LLMs) that can summarize mountains of content, answer questions about it, and even discuss it like a well-informed friend. Yet the irony stings: the very people who build and use these systems are often the ones handing their reading over to them. We feed the machine our intellectual tasks, and in return it feeds us convenience. But is convenience costing us our edge?

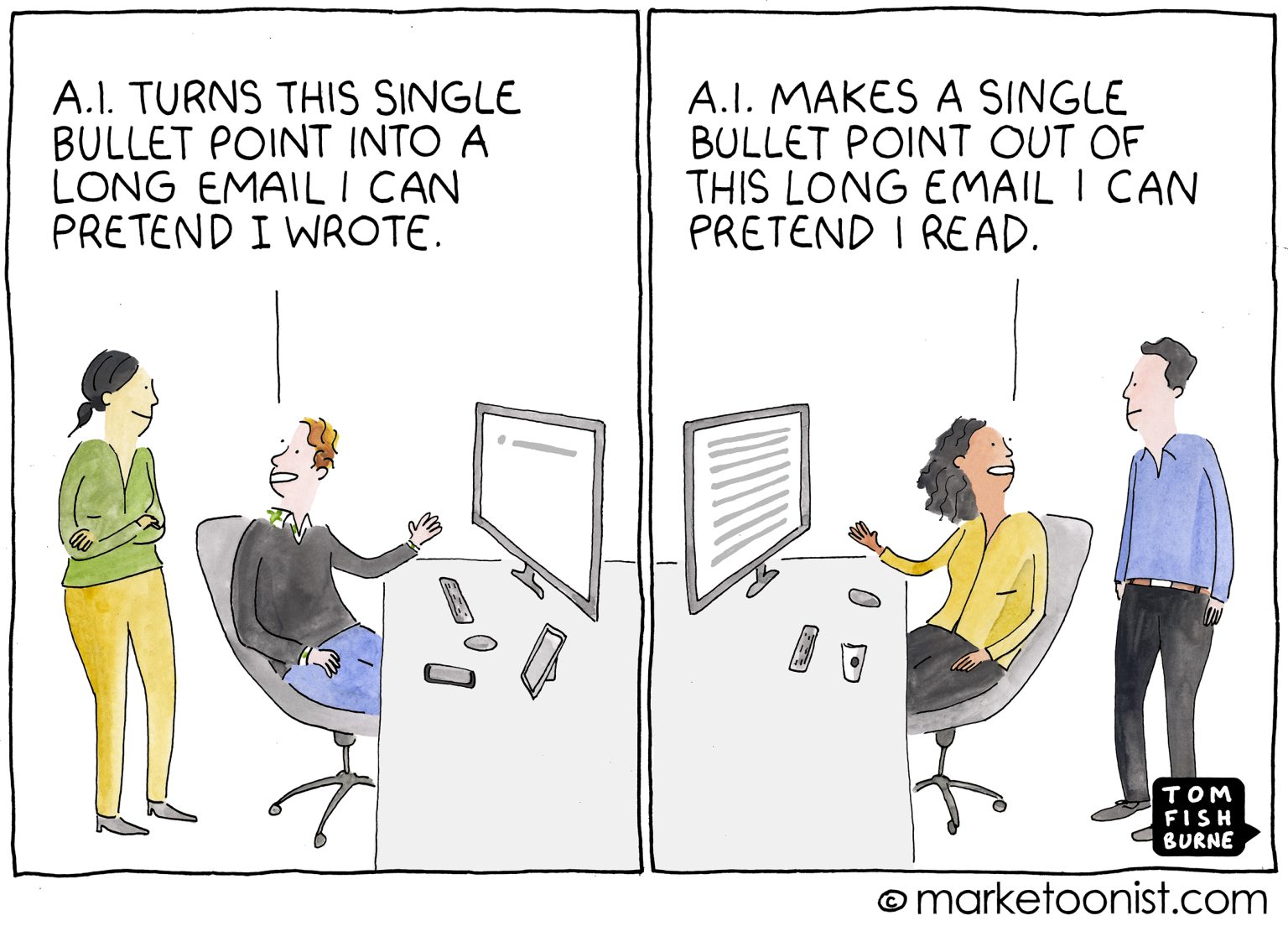

Picture Tom Fishburne’s Marketoonist cartoon in which an AI writes a memo, only for another AI to summarize it, with humans nowhere in sight. It’s hilarious, but also a bit terrifying, because it’s not far from reality. AI-assisted reading is creeping into everything, from skimming news articles to digesting legal documents. The simplest trick in the book is summarization. Drop a 50-page PDF into an LLM, ask for a quick rundown, and you have a snapshot in seconds. No more squinting at dense text for hours. It’s tempting, right? As someone who has juggled a career in academia and tech, I get the allure. We’re all busy with deadlines, family, and that ever-growing to-do list, and summarization feels like a superpower that slices through the noise.

But here’s the rub: summaries are skeletons, bones without the meat. They strip away the author’s voice, the witty turns of phrase, the subtle nuances that give a text its soul. If you’re poring over a competitor’s product launch, it’s not just the stats that matter; it’s the spin, the choice of words, the way they frame success or downplay risks. A summary might give you the facts, but it robs you of the context that makes those facts meaningful. In my line of work, missing those details can mean the difference between a smart insight and a costly mistake.

Worse, the shortcut has a price. Studies from Wharton, involving over 10,000 people, show that relying on AI summaries leads to shallower understanding. Participants remembered fewer facts, and the advice they went on to give was shorter and more generic, as if everyone were thinking from the same cookie-cutter mold. AI doesn’t just compress text; it flattens it. Reading through AI is like speed dating: you meet a lot of people but leave knowing nothing deep about any of them.

At its core, this isn’t about productivity metrics like how many words per minute you can process. It’s about us as readers and as thinkers. What happens to our retention? To our ability to connect dots across multiple sources? When we lean on AI this way, are we truly improving, or are we weakening the very muscles that make us good at what we do? I think of it as a “competence leak”: outsourcing your brain’s hard work to a machine feels like winning, but over time the skill atrophies. Your mind slacks off because the AI is always there to do the heavy lifting. I’ve seen it in myself. Early on, I used AI summaries for quick scans, and sure, they saved time. But I noticed I was less engaged and less curious. Are we winners or casualties in this AI game? That question keeps me up at night. It’s not rocket science; our brains need exercise just like our bodies do. Reading deeply and critically is how we build wisdom and how we innovate. If we let AI turn us into passive consumers, we’re not just reading less; we’re thinking less. And in a world already oversaturated with information, the last thing we need is fewer thoughtful people in it.

So here’s my practical take: treat AI summaries as triage tools, not the endgame. Use them to sift through the chaos. The world produces more text than any one person could ever read: blogs, reports, and emails pile up like stacks of unread paper on your desk. AI can help you separate the gold from the junk in minutes. Got a pile of industry reports? Summarize them first to spot which ones deserve your full attention. That is genuinely valuable for deciding where to invest your limited brainpower. I’ve done this myself when prepping for talks or researching startups, and it’s a lifesaver for prioritization. You scan the TL;DR version, and if it sparks something (say, a paper on quantum computing that sounds intriguing), you dive in. If it doesn’t, you move on. No guilt.

Used this way, AI stops being a crutch and becomes a strategic ally that helps you navigate information overload without drowning in it. Think of a summary as a detective’s initial report: it sketches the suspects, but only the full investigation reveals the truth. You’re reclaiming control, reserving your time and attention for the sources that push your understanding forward. It’s quality over quantity. In my experience, this approach has made me more efficient without the burnout of endless scrolling. You’re not skipping the reading; you’re curating it.

But the real magic happens when you shift from passive summarizing to active dialogue. Think of it as interrogating the text the way a seasoned journalist grills a source. Upload that dense research paper or earnings call transcript and start firing questions: “What’s the riskiest part of this contract?” or “How does this data compare to last year’s trends?” This isn’t a one-and-done request; it’s a conversation. You ask about ambiguous sections, request specific quotes, and draw parallels to other documents you’ve read. The AI becomes your thinking partner, surfacing things you might have glazed over, such as a contradiction between a CFO’s remarks and the financial tables. Done right, it rewards your curiosity: the deeper your questions, the richer the responses. The quality hinges on you; probing questions turn the AI into a mirror that reflects your own intellect back at you.

When I prep for board meetings or write op-eds, this dialogue method lights up connections I hadn’t seen before. The reading isn’t just faster; it’s fuller and more connected, because it forces you to engage and to think critically about what you’re “learning.” Without that engagement, you’re like a gardener who hires someone to till the soil but never plants any seeds. Adapt your strategy to the task, too. Use quick summaries for newsletters or junk email triage, but for in-depth work, like defending a business case or developing new ideas, opt for the interactive style and read the key passages with your own eyes. It’s like combining a map with the actual trek: you get guidance without missing the adventure.

Finally, a word of caution, because the AI fairy tale isn’t all roses. Hallucinations are real. They aren’t just glitches; they are fabrications, where the AI confidently produces made-up statistics or quotes that never existed. It’s like trusting a storyteller who embellishes too much. Always verify the important things yourself: check the sources and cross-reference the claims. If you’re building a case or making decisions on this basis, skipping verification is gambling, not reading. I was burned once when an AI “summary” cited a nonexistent study. Lesson learned.

Choose your strategies wisely: summaries for skimming, interrogation for depth. Used well, AI turbocharges your reading, helping you cover more ground, ask piercing questions, and uncover hidden threads. It’s like trading a bike for a sports car when you have a mountain of information to cross. Used poorly, it feeds you predigested mush and saps your brain’s vitality. Our machines will read and summarize all day, but they can’t understand the way you can. The choice to thrive or atrophy is ours, with every click and every question. Let’s make it count, because in this AI dance, our humanity is the real secret weapon.