As a parent in Seattle, I’ve often found myself pondering the digital world my 13-year-old daughter, Kate, navigates daily. She’s a typical seventh grader at a local public school: glued to her iPhone, chatting with friends, and occasionally ordering snacks via Amazon’s Alexa without a second thought. One evening over dinner, I casually asked her how much she’d learned or thought about artificial intelligence in school. Her response, “Not at all?”, came back at me like a puzzled echo, her eyebrows furrowing as if she were questioning my sanity. It struck me then how invisible AI has become in our lives, woven into everyday technology such as social media algorithms, voice assistants, and even the spell-check in her homework apps. But beneath that invisibility lies a deeper concern: Is she equipped to understand and harness a technology that will shape her future? With AI booming in tech hubs like Silicon Valley and Seattle, I worry about her falling behind, not in some abstract way, but in real, tangible opportunities. Like many parents, I’ve watched the world change, from automated customer service to AI-driven medical diagnoses, and I want Kate to thrive in it, not just consume it passively. This isn’t only about the eye-popping salaries paid to top AI engineers; it’s about empowerment, about making sure she can engage critically with tools that will define her career, her relationships, and even her sense of self. As I dug deeper, I realized we’re all a bit like Kate, skimming the surface of an ocean we barely comprehend, and that’s where the anxiety kicks in.

Kate uses AI more than she realizes, but her formal education on the topic is surprisingly sparse. Beyond a sixth-grade elective where she coded a simple game (her first brush with the logic of if-then statements), her current curriculum doesn’t touch the mechanics of algorithms or neural networks. It’s funny: living in a city surrounded by tech giants like Amazon and Microsoft, you’d think AI would be a core subject, like math or history. But for most middle schoolers, it’s treated as a mysterious force, a black box you interact with through apps without ever lifting the lid. I’ve watched her use photo-editing filters that rely on machine learning to smooth out blemishes or generate silly memes, yet she never questions how those tools decide what makes a “perfect” selfie. I’ve interviewed startup founders who gush about AI’s transformative potential, but they also warn that without the basics, kids like my daughter could be left behind well beyond the classroom: think job interviews or internships where employers expect fluency. The problem isn’t just a gap in school offerings; it’s a cultural oversight. We parents enable it by handing over AI-loaded devices without explanation, assuming kids will “figure it out.” But real understanding requires demystification, and that’s why I turned to experts, hoping to bridge the gap for both of us.

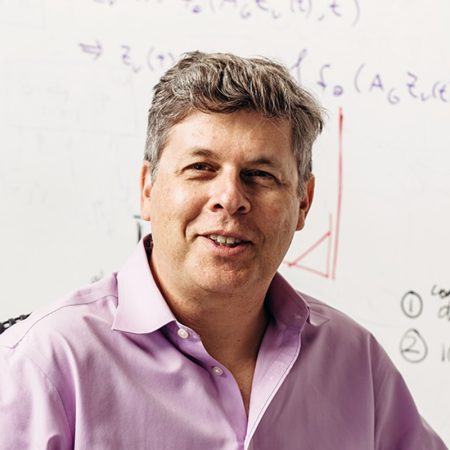

Curious about her prospects, I reached out to Karim Meghji, the new president and CEO of Code.org, a Seattle-based nonprofit that has championed computer science education since 2013. He’s not your typical executive; with a decade at RealNetworks and a stint as CTO at Remitly, Meghji brings a blend of technical savvy and practical wisdom to the role. We chatted more like two concerned parents than interviewer and subject, swapping stories about raising kids in an AI-saturated world. When I asked whether I should worry about Kate missing out on high-paying roles at places like OpenAI, he downplayed the hype and focused instead on foundational skills that benefit everyone. Meghji said Code.org’s shift toward AI-centric curricula has already reached more than 6 million students worldwide through resources like its “Hour of AI” activities, which teach basics such as what data is and how AI processes it. It’s not a sales pitch, though; he’s genuinely invested in preparing kids for a future where technology isn’t optional. Our conversation felt reassuring, like advice from a mentor who understands the parental juggling act of balancing fear of the unknown with optimism about growth. Meghji recounted how Code.org began as the Partovi brothers’ mission to democratize coding, and how it is now evolving to confront AI head-on so that no child is left on the sidelines.

What struck me most was Meghji’s “glass box” philosophy, which flipped my perspective on AI education for middle schoolers. He argues that this age, around seventh grade, is the sweet spot for moving from basic AI literacy, like knowing what a chatbot is, to true fluency. Think of it like dissecting a frog in biology class: you’re not just memorizing facts; you’re pulling apart the anatomy to understand how life works. For AI, that means tinkering: getting kids to look under the hood and inspect prompts, data inputs, and output logic, turning that intimidating black box into a transparent glass one. Meghji wants to give students “screwdrivers and hammers” so they experiment rather than merely consume. During our talk, he recalled his own kids’ confusion with AI, which mirrored my experiences with Kate. By middle school, he believes, children are ready for multi-step explorations: challenging an AI model’s reasoning, refining prompts for better results, and understanding why one query yields a poem while another spits out a recipe. This approach fosters skepticism over blind trust, teaching that AI isn’t infallible. Code.org’s resources emphasize this hands-on ethic, having students build games and websites that integrate AI models directly. It’s empowering, and it humanizes tech by making it approachable, like learning to cook by experimenting in the kitchen rather than following rigid recipes. For parents like me, it’s a reminder that education isn’t about perfection; it’s about curiosity and iteration, which build resilience in a world where AI errors, like biased outputs, are as common as they are insidious.

Beyond the technical nuts and bolts, Meghji highlighted the human elements that make AI education vital regardless of career path. He pointed out that even “smarter” adults get duped daily by AI-generated content (deepfakes, misinformation, convincingly fabricated articles), and that trend won’t ebb. For Kate, who prefers ceramics to code, the goal is learning to apply AI critically in any field. Meghji stressed ethical considerations: how human choices in design introduce bias, the moral dilemmas of job displacement, and privacy breaches in digital spaces. As creators and workers, we all have to navigate these issues and learn to collaborate in digital settings. This ties into broader life skills, such as communication, reflection, and teamwork, that transcend disciplines. Imagine Kate as a future doctor using AI for diagnostics; without understanding its flaws, she risks a misdiagnosis. Or picture her as an artist, using AI tools to enhance her creativity without losing her originality. Meghji’s insights resonated deeply. He has seen startups fail because they ignored ethics, and he advocates for curricula that blend technology with sociology, such as discussions of fairness in algorithms. He described a progression from “low literacy” interactions, such as one-off prompts, to deeper dialogues in which students guide AI as a partner. The point isn’t to make everyone a coder, but to empower people to bend technology to their will and improve their work and lives. For parents, this means encouraging curiosity without pressure and helping kids see AI as a tool for empathy and innovation, not just profit.

Ultimately, Meghji’s advice boiled down to practical, low-stress ways to introduce kids to AI, and it tackled my reservations head-on. I’m cautious about pushing tech on Kate (I’ve seen its dark sides, like addiction and misinformation), and his suggestions nudged me to be more intentional. He recommended family experiments: grab devices and tinker with AI tools for text, images, or video together, framing it as play rather than instruction. Find what excites her, perhaps generating story ideas based on her ceramics projects, and add parental guardrails, discussing responsible use without spoiling the fun. Advocacy is key; parents like me can push schools to adopt curricula like Code.org’s “Computer Science Discoveries,” which introduces middle schoolers to building with AI in fun, game-based ways. Embrace the “tinkering age” on platforms like Scratch, keeping skills sharp through playful coding or app-building. Meghji emphasized moving kids from users to builders so they develop a “maker mindset” that applies to welding, research, or any other passion. As we wrapped up, I felt hopeful, armed with strategies that treat AI as a shared adventure. For families across the country, this means injecting humanity into tech education by encouraging exploration that builds confidence, ethics, and adaptability. In an increasingly AI-driven world, it’s not just about catching up; it’s about humanizing the experience, one family chat (one dissection of the digital frog) at a time.

Reflecting on that conversation afterward, I realized Meghji’s perspective had shifted how I approach tech with Kate. It’s no longer about forcing an interest in STEM but about sparking wonder through relatable, ethical discussions. For instance, when she uses AI for homework help, we now unpack how it might short-circuit learning or carry biases, turning passive use into active critique. This humanizes the process, making AI feel collaborative rather than controlling. We’ve started family AI sessions, creating silly videos or debating AI-generated art, with the emphasis on fun rather than practicality. Meghji’s tips have inspired me to advocate more at her school, proposing workshops that blend her love of crafting with digital tools. It’s about long-term empowerment, ensuring she can innovate with intention. In building these skills, we’re not just prepping for careers; we’re fostering empathy in an AI world where technology amplifies human potential. As parents, our role is to guide, question, and ultimately humanize, turning black boxes into glass ones for the next generation.

Taking Meghji’s “tinkering age” mantra to heart, I’ve watched Kate’s enthusiasm grow through simple experiments. For example, using coding platforms to animate her pottery designs engages her creativity without overwhelming her. This hands-on approach builds resilience, teaching her that flawed AI outputs are chances to learn, not failures. The broader payoff is easy to imagine: Kate as a doctor refining AI diagnostics, or as a biologist integrating tech ethically. Meghji’s reminder about ethics feels especially poignant; we’ve discussed real cases, like biased hiring algorithms, to make abstract concepts personal. Collaboration skills come to the fore in the group AI projects we try at home, mirroring real-world teamwork. Success for parents lies in balance: nurturing curiosity while setting boundaries, so that AI enriches our kids’ lives rather than overwhelms them. In the end, Meghji’s insights point toward a balanced approach, one that turns AI education into an inclusive, human venture for all kids, regardless of the path they choose.