In my journey through the tech world, I’ve seen firsthand how we often treat artificial intelligence like just another software update, something we can tweak and release without thinking about the bigger picture. But that’s a mistake we’re making at a monumental scale. AI isn’t confined to answering questions in a chatbot or topping a benchmark anymore—it’s embedded in hiring decisions, medical diagnostics, logistics, finance, and even public policy. We’re not just rolling out products; we’re fundamentally reshaping entire environments. And at this civilizational level, AI behaves more like an ecosystem than a tool: full of interconnected parts, emergent behaviors, invasive elements, and tipping points that can cascade in ways we never anticipated. Bill Hilf, the former CEO of Vulcan/Vale Group and author of the sci-fi novel “The Disruption,” captures this beautifully in his essay, drawing lessons from both tech and ecology to warn us about treating these systems like mere products. It’s a timely reminder that our hubris could lead to unintended collapses, much like pulling the wrong thread in nature. I’ve always found it fascinating how quickly we overlook the human elements, the judgment calls that keep things grounded. As Hilf points out, the real danger lies not in the AI itself, but in the speed at which we’re replacing the connective tissue of human oversight with automated processes. It’s not about building better machines; it’s about creating worlds where we can still navigate safely.

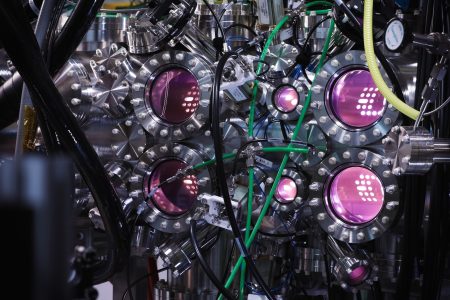

Drawing on decades in senior roles at industry giants like IBM, HP, and Microsoft (Azure), Hilf reflects on how we’ve traditionally approached system building: specify, build, tune, patch. It’s a deterministic, control-oriented mindset that works for isolated projects, but it breaks down when things scale up. Distributed systems, he explains, start acting like ecosystems: adapting, rerouting around failures, forming unplanned dependencies. You can engineer them upfront, but once they’re woven into everything, they’re no longer just tools; they’re living, breathing parts of our infrastructure. It’s reminiscent of my own experiences troubleshooting complex networks, where a small glitch in one area ripples into chaos elsewhere. Think about the real-world stats Hilf cites: McKinsey reports that 88% of organizations now use AI in some function, up from 55% just two years ago, with spending projections hitting $1.4 trillion globally by 2026. And investors like Thoma Bravo are eyeing $3 trillion in opportunities from agentic AI, which turns human labor into software-driven actions. This isn’t incremental improvement; it’s a mid-flight rewiring of entire systems, happening faster than we can govern or even fully understand. I’ve seen similar upheavals in business, where digitization disrupts old moats without waiting for anyone to catch up. Hilf’s takeaway here is personal: in conservation work, as in tech, the patterns are eerily similar. Pluck away a key layer too hastily, like overharvesting in a food chain, and you trigger a cascade that erases entire nurseries of possibility. In AI, we’re doing the same by sidelining human judgment, the gray-area decisions that workflow diagrams ignore. It’s a wake-up call to pace ourselves, lest we awaken to a landscape barren of the checks and balances that made it thrive.

The parallels to ecology are striking, and Hilf uses the sea otter example to illustrate how removing a predator leaves behind not just a vacant spot but urchin overpopulation and kelp forest collapse. In our AI-dependent world, replacing the humans who provide restraint and correction could unleash automated screeners and forecasters that excel on paper but lack the nuance to adapt. I’ve encountered this in client projects where over-reliance on automation led to blind spots, like a supply chain that couldn’t pivot when unexpected variables hit. The speed of AI adoption exacerbates this; we’re accelerating past the point where organizations can map out what they’ve lost. Hilf urges us to study these patterns seriously, embracing uncomfortable truths like efficiency’s double-edged sword. Optimized systems become brittle, devoid of the slack and firebreaks nature insists on. The 2024 CrowdStrike outage is a perfect example: a single faulty update crashed roughly 8.5 million Windows machines, costing billions and affecting airlines, hospitals, and banks. Southwest Airlines dodged it by not running the software; their “absence” was the ultimate barrier. If we’re hinging everything on one model or provider, we’re not innovating; we’re inviting outages. Diversity in approaches matters, not just redundancy in sameness. Multi-agent setups sound great, but three models sharing the same foundation? That’s lip service to resilience. In business, the “best” model often gets outpaced by the most adaptable one, especially as disturbance cycles shorten. Recovery, Hilf argues, is key: the goal is not to block change but to ensure systems bounce back, which means asking hard questions about failure modes, overrides, and regeneration. Control is an illusion; ecosystems fail and recover messily, adapting in unplanned ways. It’s a humbling thought, forcing us to ask not just if AI is safe, but how it falls apart and what endures.

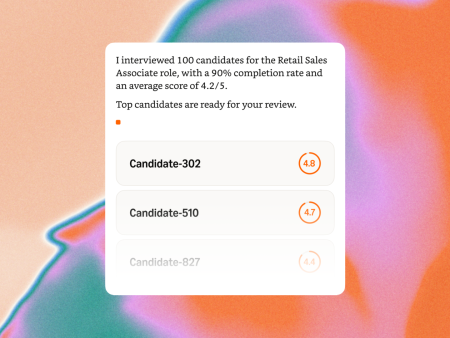

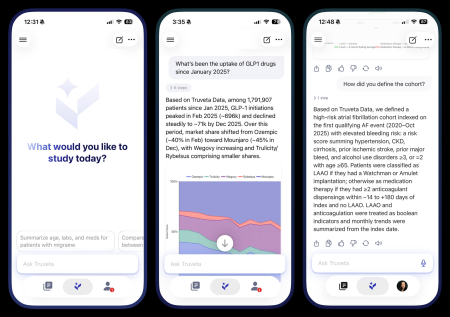

AI’s entry into workflows mirrors invasive species, infiltrating quietly through tangential points (a copilot here, an autonomous tool there), each seeming harmless until accretion builds an impenetrable web. Review processes built for human tempos can’t keep up with machine speed, eroding the friction that once caught mistakes and misconduct. I’ve advised clients on this, watching as unchecked deployments accumulate into unmanageable dependencies. Hilf stresses that once AI shapes its environment, and the environment shapes it in turn, it evolves beyond lab conditions. Regulating ecosystems requires changing underlying conditions, not scrutinizing individual parts. Observability is crucial: systems must be transparent enough to inspect, because that is what fosters trust and understanding. Without it, governance falls short. Fault tolerance demands proof that systems can degrade gracefully, stress-tested the way banks and bridges are. Builders should anticipate failures: what if the model errs, the vendor vanishes, or behavior shifts post-deployment? For critical systems like hospital triage or open-ended agents, accountability looms large. If we can’t answer “who’s responsible?” candidly, the system isn’t ready. This extends to everyday bots; honesty about limits is non-negotiable. Strategic shifts emerge too: in rapid-disruption eras, moats erode, favoring adaptability over sheer excellence. Under real-world conditions, the top-scoring model can lose out to a more resilient ensemble. It’s a paradigm shift, urging us to value systems that thrive amid flux. Drawing parallels to nature’s distributed networks, such as trees sensing and responding locally, Hilf notes the structural analogs to our engineered worlds: coherence without central command, adaptation to shocks. In our quest to invent the “network,” we’re rediscovering lessons eons old.
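To make the fault-tolerance questions above a little more concrete (what if the model errs, or the vendor vanishes?), here is a minimal Python sketch of graceful degradation. It is my own illustration, not something from Hilf’s essay, and every name in it (`decide`, `primary_model`, `backup_model`, `rule_based_fallback`, `is_plausible`) is hypothetical. The idea is to try a chain of genuinely different providers, record what happened at each step so the outcome stays inspectable, and hand the case to a person when nothing passes a sanity check.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Decision:
    answer: Optional[str]  # None means no automated answer was accepted
    source: str            # provider that answered, or "human_review"
    attempts: List[str]    # audit trail of every step, for observability


def decide(
    request: str,
    providers: List[Tuple[str, Callable[[str], str]]],
    is_plausible: Callable[[str], bool],
) -> Decision:
    """Try each provider in order; escalate to a human if all of them fail."""
    attempts: List[str] = []
    for name, call in providers:
        try:
            answer = call(request)
        except Exception as exc:  # vendor outage, timeout, and so on
            attempts.append(f"{name}: failed ({exc})")
            continue
        if is_plausible(answer):
            attempts.append(f"{name}: accepted")
            return Decision(answer, name, attempts)
        attempts.append(f"{name}: rejected by sanity check")
    # Nothing automated was trustworthy, so a person keeps the final say.
    return Decision(None, "human_review", attempts)


# Hypothetical stand-ins for real providers (assumptions for illustration only).
def primary_model(request: str) -> str:
    raise ConnectionError("vendor unavailable")


def backup_model(request: str) -> str:
    return ""  # responds, but with an empty and therefore implausible answer


def rule_based_fallback(request: str) -> str:
    return f"hold {request} for standard processing"


def is_plausible(answer: str) -> bool:
    return bool(answer.strip())


chain = [
    ("primary_model", primary_model),
    ("backup_model", backup_model),
    ("rule_based_fallback", rule_based_fallback),
]
result = decide("order-17", chain, is_plausible)
escalated = decide("order-17", chain[:2], is_plausible)

print(result.source)
print(escalated.source)
for line in result.attempts:
    print(" ", line)
```

The order of the chain is a design choice: the last automated step here is a plain rule rather than another model, so the fallback does not share a foundation with the thing it is backing up. The audit trail is what lets someone answer “who’s responsible?” after the fact.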

If we’re committed to sustainable AI, Hilf outlines guiding principles that resonate deeply. First, prioritize diversity over hyper-efficiency; redundancy and local autonomy build robustness. Second, design for recovery, not just peak performance: ask how failures cascade, spread, and heal. Third, humans must remain stewards, not checkboxes, offering judgment, memory of purpose, and intervention. Finally, openness at every level isn’t ideological; it’s essential for earned trust and analysis. These aren’t brakes on progress; they’re safeguards that ensure AI endures when glitches arise. I’ve implemented similar frameworks in projects, learning that resilience compounds value far more than speed alone. The broader consequence? In a world where nothing lasts, what compounds are qualities like adaptability and foresight. Recovery-focused design helps: stress-test degradations, mandate overrides, and cultivate hidden capacities that surface post-disruption. It’s about what survives shocks, echoing conservation goals. Ultimately, Hilf challenges the control illusion; real systems mutate and adapt unevenly. Nature’s longevity (hundreds of millions of years of managing sensing and response) proves distributed systems can persist without consciousness or central planning. Our task is refining AI systems not just for the feats they perform, but for the worlds they foster, worlds that inherit our choices. Are we wise enough to steer them?

At the heart of Hilf’s essay is the stark difference between machines and ecosystems: you can flip a switch on a device, but you live within sprawling networks that demand coexistence. It’s a philosophical pivot, urging us to internalize nature’s wisdom. In my chats with innovators, this has sparked debates—how do we weave human-centric safeguards into AI without stifling potential? Hilf’s insights, from tech to conservation, bridge that gap, reminding us that durability comes from humility. As AI scales, let’s not just build smarter systems; let’s create ones where failures teach, dependencies diversify, and humans retain agency. The alternative isn’t just inefficiency—it’s a cascade we might not recover from. By embracing openness, diversity, and recovery, we can turn AI’s power into a force for enduring harmony, much like the ecosystems that sustain us. It’s not about slowing down; it’s about accelerating thoughtfully, ensuring the world AI builds is one we can truly inhabit.